Load balancing plays a vital role for every e-business. High-traffic websites are constantly asked to return the correct text, images, video, or application data quickly and reliably while serving hundreds of thousands, if not millions, of concurrent user requests.

In this article we’ll explore the ins and outs of load balancing and how intelligent load balancing can take your overall website performance to the next level.

Load balancing explained

What is load balancing?

Load balancing is the process of efficiently distributing network traffic across multiple backend servers, known as a server farm or server pool, to deliver high speed and performance for customers.

The main purpose of load balancing is preventing server overload and maintaining a healthy amount of activity in each server.

The role of a load balancer is often compared to a traffic cop, sitting in front of your servers, routing client requests to the right location across all servers at any given moment, and preventing unforeseen incidents by making sure no one server is overworked.

The three main functions of a load balancer include:

- Distributing client requests or network load efficiently across multiple servers.

- Ensuring high availability and reliability by only sending requests to online servers.

- Providing the flexibility to add or remove servers depending on demand.

To avoid degrading the user’s experience, when a new server is added to the server group, the load balancer automatically starts to send requests to it. If a single server ever goes down, the load balancer will redirect traffic to the remaining online servers.
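To make this behavior concrete, here is a minimal sketch of a server pool that only routes to online servers and picks up new servers as soon as they are added. The `ServerPool` class, its method names, and the `app-1` style server names are all hypothetical and chosen for illustration; a real load balancer would update the online flags from periodic health checks.

```python
class ServerPool:
    """Tracks backend servers and routes only to those marked online."""

    def __init__(self):
        self._online = {}  # server name -> True if the server is online
        self._turn = 0     # counter used to rotate through healthy servers

    def add(self, name):
        # A newly added server is eligible for traffic immediately.
        self._online[name] = True

    def remove(self, name):
        self._online.pop(name, None)

    def set_online(self, name, online):
        # In practice this flag would be driven by health checks.
        if name in self._online:
            self._online[name] = online

    def route(self):
        healthy = [name for name, up in self._online.items() if up]
        if not healthy:
            raise RuntimeError("no online servers available")
        server = healthy[self._turn % len(healthy)]
        self._turn += 1
        return server


pool = ServerPool()
pool.add("app-1")
pool.add("app-2")

# app-1 goes down: every request is redirected to app-2.
pool.set_online("app-1", False)
after_failure = [pool.route() for _ in range(3)]

# app-3 is added: it starts receiving requests without any other change.
pool.add("app-3")
after_scale_out = {pool.route() for _ in range(2)}
```

The rotation here is deliberately simple; the point is only that routing decisions are made against the current list of online servers.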

The benefits of load balancing

1. Improve productivity by getting rid of downtime

One of the biggest advantages of load balancing is reducing downtime. Global businesses operating across multiple time zones need load balancing so that there are no work disruptions in any location. If a company is located in one place, maintenance is easier to schedule because there is only one time zone. Companies take advantage of this and schedule downtime during non-working hours, such as early mornings or weekends.

Load balancing enables your company to shut off any server for maintenance and channel traffic to other servers without disrupting work hours. This way, companies can reduce downtime while improving performance.

2. Scalability due to better performance from servers to allow for increased traffic

The amount of traffic to a website has a direct effect on the performance of that site. Hence, if there is a sudden spike in traffic, a server may have difficulty handling the excess traffic, possibly causing the website to go down.

With load balancing the traffic is spread across multiple servers, allowing the entire system to handle the traffic more efficiently. Load balancing allows you to add or remove resources without causing any interruptions to incoming traffic. The server administrators can scale the web servers up or down depending on your website’s traffic fluctuations.

3. Redundancy due to the automatic transfer of traffic to available servers

Load balancing can significantly reduce the impact of hardware failure and improve your website’s overall uptime. If traffic is sent to two or more web servers and one server fails, the load balancer will automatically transfer the traffic to the other working servers. By maintaining multiple load-balanced servers, you can be assured that a working server will always be online to handle website traffic even when the hardware fails.

4. Flexibility during maintenance tasks

IT administrators can perform several maintenance tasks on web servers without impacting the website’s uptime, which provides great flexibility.

This staggered maintenance system, where at least one server is always available to pick up the workload while the other servers are undergoing maintenance, ensures that the website’s users do not experience any outages. All traffic can be directed to one server while the load balancer is in active/passive mode.

5. Efficiency thanks to the ability to avoid disruption of workload by detecting failures early on

Load balancing helps detect server failures early and minimizes disruption to your servers or workload. With multiple data centers spread across a number of cloud providers, you can bypass detected failures by redistributing resources to unaffected areas.

Different types of load balancers

A load balancer can be a physical device, or it can run as software, in some cases incorporated into a controller, using one or more algorithms.

Hardware-based load balancers are typically high-performance appliances, capable of securely processing multiple gigabits of traffic from various types of applications.

Benefits of hardware-based load balancers

- May contain built-in virtualization capabilities that consolidate numerous virtual load balancer instances on the same hardware.

- Includes Application-Specific Integrated Circuits (ASICs) adapted for a particular use, which allow high-speed forwarding of network traffic. They are frequently used for transport-level load balancing because they are faster than software solutions.

- More flexible multi-tenant architectures and full isolation of tenants.

Disadvantages of hardware-based load balancers

- Relies on proprietary hardware housed in a data center.

- Requires a team of sophisticated IT personnel to install, tune, and maintain the system.

- Only large businesses with larger IT budgets can reap the benefits of improved performance and reliability.

- Doesn’t support cloud load balancing since cloud-based vendors don’t allow customer-owned or proprietary hardware in their environment.

Software-based load balancers run on standard hardware, such as a desktop PC, and standard operating systems.

Benefits of software-based load balancers

- Can fully replace load balancing hardware while delivering analogous functionality and superior flexibility.

- May run on common hypervisors, in containers or as Linux processes, with minimal overhead on bare-metal servers.

- Highly configurable depending on the use cases and technical requirements in question.

- Save space and reduce hardware expenditures.

- Can deliver the performance of hardware-based solutions at a much lower cost.

- Affordable for smaller companies.

- Ideal for cloud-based load balancing since they run in the cloud like any other software application.

Various types of load balancing methods

Load balancers follow algorithms to determine how requests are distributed across the server pool and where they go. Depending on your specific needs, you can choose the algorithm that is most appropriate for the scenario.

Different types of algorithms include:

Round robin – Requests are distributed sequentially across the group of servers. This process relies on a rotating list: the virtual server forwards each client request to the next server on the list. It’s easy to implement, but since it does not take into account the load that’s already on each server, a server may become overloaded with too many processor-intensive requests.
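As a minimal sketch of the rotating list, the snippet below cycles through a fixed set of servers. The function name `round_robin` and the server names are hypothetical.

```python
from itertools import cycle


def round_robin(servers):
    """Return an endless iterator that visits servers in a fixed rotation."""
    return cycle(servers)


rotation = round_robin(["app-1", "app-2", "app-3"])

# Four consecutive requests: the fourth wraps around to the first server.
first_four = [next(rotation) for _ in range(4)]
# first_four == ["app-1", "app-2", "app-3", "app-1"]
```

Note that nothing in the rotation looks at how busy a server is, which is exactly the weakness described above.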

Least connection method – Virtual servers send client requests to the servers with the fewest active connections. Because it accounts for the current load on each server, this method generally performs better than round robin when requests vary in duration. Weighted variants also factor in each server’s relative computing capacity.
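A rough sketch of the selection step, assuming the load balancer already keeps a count of active connections per server. The `active` dictionary and its values are made up for illustration.

```python
def least_connections(active):
    """Return the server with the fewest active connections.

    `active` maps server name -> current number of open connections.
    On a tie, the server listed first wins.
    """
    return min(active, key=active.get)


active = {"app-1": 12, "app-2": 3, "app-3": 7}

chosen = least_connections(active)
active[chosen] += 1  # the new request opens one more connection on that server
```

A real implementation would also decrement the count when a connection closes, so the counts keep reflecting live load.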

Least time method – This method is more sophisticated than the least connection method. It relies on the time taken by a server to respond to a health monitoring request. The method examines how loaded the server is and the overall expected user experience by looking at the speed of the response. It will also take into account the number of active connections on each server.
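One way to picture this is a score that combines both signals. The formula below, response time multiplied by the number of connections the server would have after accepting the request, is an assumption made for illustration and not how any particular product computes it. `ServerStats` and its fields are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ServerStats:
    name: str
    health_check_ms: float   # time taken to answer the last health check
    active_connections: int  # connections currently open on the server


def least_time(stats):
    """Pick the server with the lowest combined score (illustrative formula)."""
    return min(
        stats,
        key=lambda s: s.health_check_ms * (s.active_connections + 1),
    )


stats = [
    ServerStats("app-1", health_check_ms=20.0, active_connections=9),  # score 200
    ServerStats("app-2", health_check_ms=35.0, active_connections=2),  # score 105
    ServerStats("app-3", health_check_ms=30.0, active_connections=4),  # score 150
]

best = least_time(stats)
```

In this example the fastest responder, `app-1`, is not chosen because it is already carrying the most connections.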

Hash method – Load balancers make decisions on a hash of various data from the incoming packet. The packet includes connection or header information, such as source or destination IP address, port number, and URL.
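As a sketch, the snippet below hashes the source IP address and URL and maps the result onto the server list, so the same client and URL always land on the same server while the pool is unchanged. The choice of SHA-256, the key format, and the function name `pick_by_hash` are assumptions for illustration.

```python
import hashlib


def pick_by_hash(servers, source_ip, url):
    """Map a (source IP, URL) pair to a server deterministically."""
    key = f"{source_ip}|{url}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the digest as an integer, then wrap it
    # onto the available servers.
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["app-1", "app-2", "app-3"]
first = pick_by_hash(servers, "203.0.113.7", "/checkout")
second = pick_by_hash(servers, "203.0.113.7", "/checkout")
# first and second are always the same server.
```

With this simple modulo mapping, adding or removing a server reassigns most keys; consistent hashing is the usual way to limit that reshuffling.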

Random with two choices – This method picks two servers at random, then applies the Least Connections algorithm to those two and sends the request to the one with fewer active connections.
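A minimal sketch, assuming the pool has at least two servers and that connection counts are tracked in a dictionary. The function name and sample data are hypothetical.

```python
import random


def two_random_choices(active, rng=random):
    """Sample two distinct servers, return the one with fewer connections.

    `active` maps server name -> active connection count and must
    contain at least two servers.
    """
    first, second = rng.sample(list(active), 2)
    return first if active[first] <= active[second] else second


active = {"app-1": 10, "app-2": 1, "app-3": 5}
chosen = two_random_choices(active)
# The busiest server, app-1, can never be chosen here: whichever server
# it is paired with has fewer connections.
```

Comparing only two candidates keeps each decision cheap while still steering traffic away from the most loaded servers.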

Least bandwidth method – This method looks for the server currently serving the least amount of traffic as measured in megabits per second (Mbps).

Least packets method – This method selects the server that has received the fewest packets in a given time period.

Custom load method – The load balancer queries the load on individual servers via SNMP. Administrators can define the server load metrics of interest, such as CPU usage, memory, and response time, and combine them to suit their requirements.
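The combination step could look something like the sketch below, which assumes the metrics have already been collected (the SNMP polling itself is not shown). The metric names, weights, and sample values are all made up for illustration; the weighted sum is just one possible way to combine them.

```python
def custom_load_score(metrics, weights):
    """Combine the chosen metrics into one number; lower means less loaded."""
    return sum(weights[name] * metrics[name] for name in weights)


def pick_lowest_load(servers, weights):
    """Return the server whose weighted load score is lowest."""
    return min(
        servers,
        key=lambda name: custom_load_score(servers[name], weights),
    )


# Hypothetical administrator-defined weights for each metric.
weights = {"cpu_pct": 0.5, "mem_pct": 0.3, "response_ms": 0.2}

# Hypothetical values, as they might look after polling each server.
servers = {
    "app-1": {"cpu_pct": 80, "mem_pct": 60, "response_ms": 40},
    "app-2": {"cpu_pct": 35, "mem_pct": 70, "response_ms": 55},
}

chosen = pick_lowest_load(servers, weights)
```

Here `app-2` wins despite higher memory use and slower responses, because CPU usage carries the largest weight.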

So… what is intelligent load balancing?

Multi CDN and load balancing

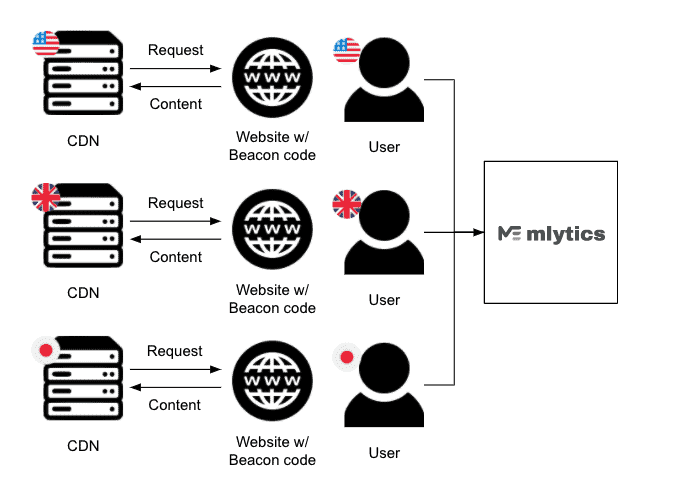

A proper combination of Multi CDN and intelligent load balancing can direct user requests to the best performing CDN, based on the user’s location. If multiple providers are in one region, the content is served from the fastest one.

Depending on the situation and scenario, there are different load-balancing methods to manage and operate multiple CDNs.

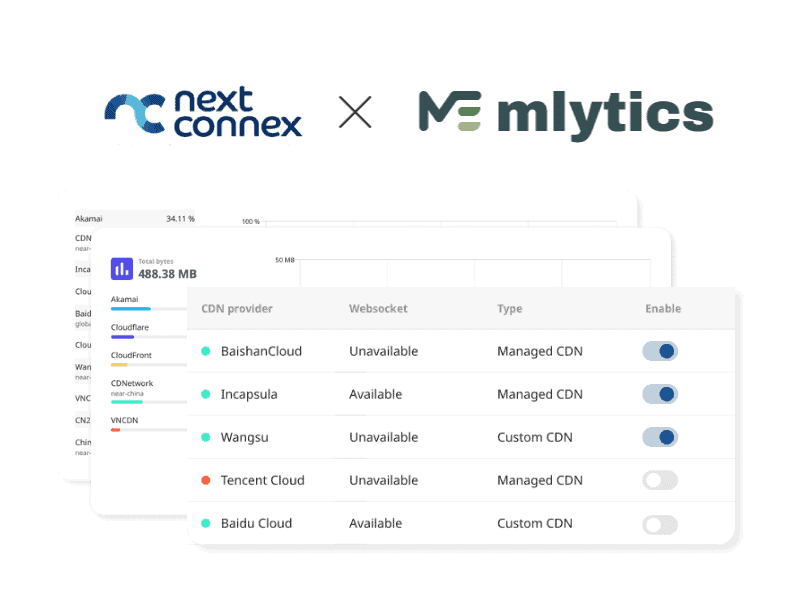

The Mlytics Multi CDN platform grants clients access to multiple top-tier CDNs, directly improving latency, uptimes, and cost efficiency on a global scale.

RUM/synthetic monitoring-driven load balancing

RUM (real user monitoring) and synthetic monitoring are two great ways to collect website performance data. To use this data to drive load balancing decisions, it is imperative to have enough of it to trust the system to make accurate decisions.

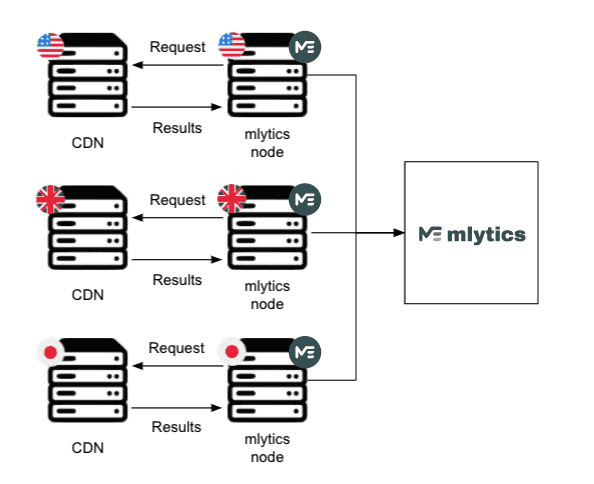

To be able to do this, Mlytics deployed hundreds of nodes around the world which are used for consistent synthetic monitoring. The nodes provide Mlytics the ability to collect CDN performance and availability data from various regions around the globe.

In addition to utilizing data gathered via synthetic monitoring, Mlytics is also leveraging RUM data. With RUM data, Mlytics gives customers the option to install a code snippet on their website to collect real user monitoring data, which is anonymous and privacy-protected.

Mlytics Smart Load Balancing then combines these two data sources to accurately reflect the actual performance status for all the CDNs available on the platform, anywhere around the world.

By constantly identifying and pointing traffic to the best-performing CDN based on the data collected via the hundreds of strategically placed nodes, customers around the world have been improving their website performance and minimizing the risk of outages and downtime.

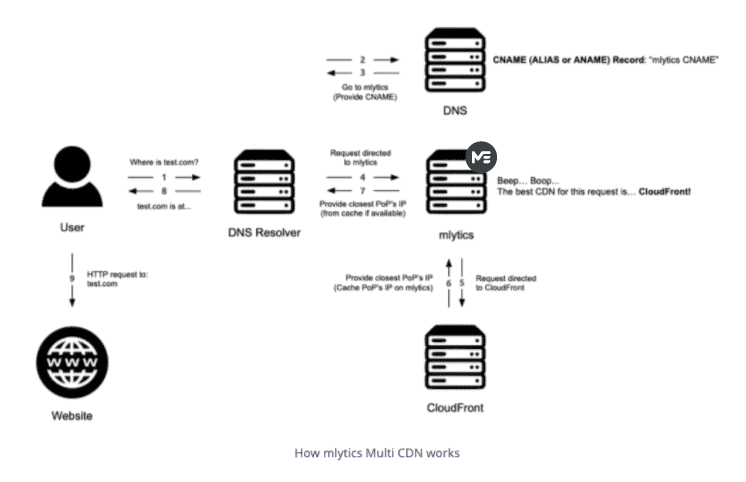

How Mlytics Smart Load Balancing works

- When a user visits a website, e.g. www.abc.com, a DNS lookup is triggered.

- The request is directed to the Mlytics system via the CNAME assigned by Mlytics, provided that it has been correctly added to the DNS record.

- The system measures user geolocation, network latency, and CDN performance.

- The system finds the best CDN for the user and routes the request to the designated CDN.

- The user can now access www.abc.com via the selected CDN.
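The selection step in the flow above can be pictured with a small sketch. This is not the Mlytics implementation: the `CdnMeasurement` type, the `pick_cdn` function, the availability threshold, the fallback rule, and the `cdn-a`/`cdn-b` sample data are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class CdnMeasurement:
    cdn: str
    region: str
    latency_ms: float
    availability: float  # fraction of successful checks, 0.0 to 1.0


def pick_cdn(measurements, user_region, min_availability=0.99):
    """Pick the lowest-latency CDN in the user's region that is available enough."""
    in_region = [m for m in measurements if m.region == user_region]
    if not in_region:
        raise LookupError(f"no measurements for region {user_region!r}")
    usable = [m for m in in_region if m.availability >= min_availability]
    if not usable:
        # Every CDN is degraded: fall back to the most available one.
        return max(in_region, key=lambda m: m.availability).cdn
    return min(usable, key=lambda m: m.latency_ms).cdn


measurements = [
    CdnMeasurement("cdn-a", "US", latency_ms=40.0, availability=0.90),
    CdnMeasurement("cdn-b", "US", latency_ms=55.0, availability=0.999),
    CdnMeasurement("cdn-a", "EU", latency_ms=30.0, availability=0.999),
    CdnMeasurement("cdn-b", "EU", latency_ms=45.0, availability=0.999),
]

us_choice = pick_cdn(measurements, "US")  # cdn-a is degraded in the US
eu_choice = pick_cdn(measurements, "EU")  # cdn-a is healthy and faster in the EU
```

The same CDN can be skipped in one region and preferred in another, since the decision is made per region from the latest measurements.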

It’s doing what it’s designed for…

Last year, on July 18th, Cloudflare’s backbone network experienced a configuration error. This caused an outage for about 27 minutes, directly affecting thousands of businesses around the world.

Many locations with heavy internet usage were affected, including areas on the east and west coast and central parts of the United States, all the way to the eastern coast of South America, and spanning across the ocean to multiple parts of Europe, including London, Frankfurt, Paris, Stockholm, Moscow, and St. Petersburg.

Fortunately, as Cloudflare is only one of the many CDNs available from the Mlytics marketplace, our load balancing solution was able to swap out Cloudflare with the next-best performing CDN (different for each region) to complete user requests.

The Smart Load Balancing optimization chart below demonstrates which CDNs were used (swapped over) during a certain timespan.

During this particular incident, the Mlytics Smart Load Balancing solution selected Akamai as the CDN for the United States. This chart displays how Akamai traffic changed in response to Cloudflare’s performance drop. Several query spikes in the United States show the load balancing system swapping over to Akamai.

Additionally, the CDN performance chart below illustrates a latency spike and an availability drop for Cloudflare in the same time frame. This feature is called ‘Pulse’ and shows key information on latency and availability from all CDN providers.

Together, the two charts show how the load balancing system responded in the US by swapping user requests over from the Cloudflare CDN to the available Akamai CDN.

Takeaways

Today, the success and survival of e-businesses rely on their ability to deliver a fast and efficient web experience for their visitors.

With internet traffic increasing and businesses going global, it is key that requests are distributed effectively to prevent slow loading times and downtimes. This is where Smart Load Balancing comes into play!